Micron’s latest update on its 3GB (24Gb) GDDR7 memory modules may look like a small specification change on paper. In reality, it represents a meaningful shift in how GPUs can be designed—especially in the mid-range market where VRAM limitations have become increasingly visible.

For years, GPU performance discussions have centered on compute power. But in 2026, memory capacity and bandwidth are emerging as the real bottlenecks. Micron’s move to 3GB modules offers a new way forward.

The Problem: A VRAM Bottleneck in Modern GPUs

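GPU manufacturers have been constrained by memory configurations that don’t scale cleanly. Each GDDR chip connects over a 32-bit slice of the memory bus, so with the 2GB (16Gb) chips that have been the norm, capacity is tied to bus width: a 128-bit bus yields 8GB and a 192-bit bus yields 12GB. On the 128-bit designs common in the mid-range, capacity typically lands in awkward tiers: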

- 8GB (entry-level baseline)

- 16GB (higher-end configurations)

There has been little flexibility between those tiers without increasing cost or complexity.

To bridge that gap, manufacturers have historically relied on:

- Higher-density chips (4GB per module) – expensive and not widely deployed

- Clamshell memory layouts – placing memory on both sides of the PCB, increasing heat and manufacturing cost

Neither solution has scaled well for mainstream GPUs. At the same time, rising memory costs and supply constraints have made it harder to justify larger VRAM configurations in mid-range cards. As we’ve covered in our analysis of rising memory prices and their impact on gaming hardware, the industry has been under increasing pressure to deliver more capacity without dramatically increasing cost.

The Shift: Why 3GB Modules Matter

Micron’s 3GB GDDR7 modules introduce a new middle ground. Instead of jumping from 8GB to 16GB, GPU designers can now build more balanced configurations.

For example, with one 3GB module per 32 bits of bus width:

- 128-bit bus → 12GB VRAM

- 192-bit bus → 18GB VRAM

- 256-bit bus → 24GB VRAM

This flexibility is critical. It allows manufacturers to better match memory capacity with real-world workload demands without overbuilding the entire GPU.
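The arithmetic behind these tiers can be sketched in a few lines of Python. This is a minimal illustration that assumes each memory chip occupies a 32-bit slice of the bus and that a clamshell layout doubles the chip count; the function name `vram_capacity_gb` and its parameters are hypothetical, chosen only for this example.

```python
# Assumption: each GDDR chip uses a 32-bit slice of the memory bus.
CHIP_INTERFACE_BITS = 32


def vram_capacity_gb(bus_width_bits, gb_per_chip, clamshell=False):
    """Return total VRAM in GB for a given bus width and chip density."""
    if bus_width_bits % CHIP_INTERFACE_BITS != 0:
        raise ValueError("bus width must be a multiple of 32 bits")
    chips = bus_width_bits // CHIP_INTERFACE_BITS
    if clamshell:
        # Clamshell: chips on both sides of the PCB share each 32-bit slice.
        chips *= 2
    return chips * gb_per_chip


for bus in (128, 192, 256):
    with_2gb = vram_capacity_gb(bus, 2)
    with_3gb = vram_capacity_gb(bus, 3)
    print(f"{bus}-bit bus: {with_2gb}GB with 2GB chips, {with_3gb}GB with 3GB chips")
```

Under the same assumptions, `vram_capacity_gb(128, 2, clamshell=True)` gives 16GB, which is how a 128-bit card reaches the higher tier today without denser chips.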

GDDR7: More Than Just Capacity

The transition to GDDR7 is not only about memory size. It also introduces fundamental changes in how data is transmitted.

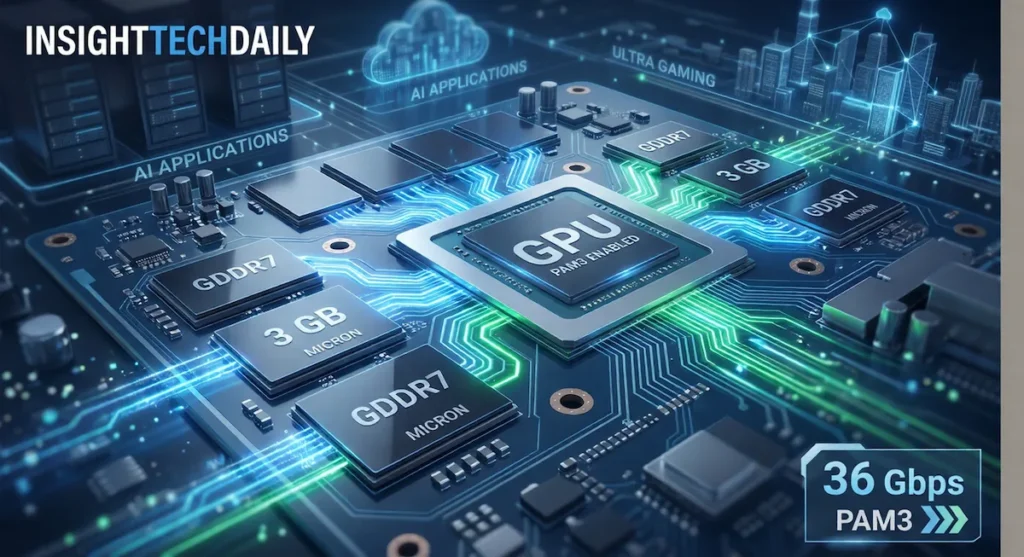

PAM3 Signaling

GDDR7 uses Pulse Amplitude Modulation 3 (PAM3), which signals with three voltage levels instead of two. That lets it encode 3 bits across every 2 signaling cycles, or 1.5 bits per cycle, compared with 1 bit per cycle for the traditional NRZ signaling used in GDDR6. This improves bandwidth efficiency without requiring a proportional increase in clock speed.
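To see where the 1.5 figure comes from, consider the following sketch. Two three-level symbols give nine possible combinations, which is enough to carry the eight values of a 3-bit group. The mapping below is purely illustrative and is not the encoding table from the GDDR7 specification; the names `encode_bits` and `decode_pair` are hypothetical.

```python
from itertools import product

# Three signal levels per symbol, written here as -1, 0, +1.
LEVELS = (-1, 0, 1)

# Two consecutive symbols yield 3 * 3 = 9 distinct pairs.
SYMBOL_PAIRS = list(product(LEVELS, repeat=2))

# Illustrative mapping only: assign the eight 3-bit values (0..7)
# to the first eight pairs, leaving one pair unused.
ENCODE = dict(zip(range(8), SYMBOL_PAIRS))
DECODE = {pair: value for value, pair in ENCODE.items()}


def encode_bits(value):
    """Map a 3-bit value (0..7) to a pair of three-level symbols."""
    if not 0 <= value < 8:
        raise ValueError("value must fit in 3 bits")
    return ENCODE[value]


def decode_pair(pair):
    """Recover the 3-bit value from a pair of symbols."""
    return DECODE[pair]


BITS_PER_SYMBOL = 3 / 2  # 3 bits carried by 2 symbols

print(f"{len(SYMBOL_PAIRS)} symbol pairs available, 8 needed for 3 bits")
print(f"Effective rate: {BITS_PER_SYMBOL} bits per symbol")
```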

Higher Data Rates

Micron’s GDDR7 modules are targeting per-pin data rates of up to 36 Gbps, representing a significant increase over high-end GDDR6 and GDDR6X implementations.
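As a rough guide to what that data rate means at the card level, peak memory bandwidth can be estimated by multiplying the per-pin rate by the bus width and dividing by eight to convert bits to bytes. The sketch below assumes the 36 Gbps figure is a per-pin rate and ignores real-world overheads; `peak_bandwidth_gbs` is a hypothetical helper name.

```python
def peak_bandwidth_gbs(data_rate_gbps_per_pin, bus_width_bits):
    """Theoretical peak bandwidth in GB/s: per-pin rate x pins / 8 bits per byte."""
    return data_rate_gbps_per_pin * bus_width_bits / 8


# Assumption: 36 Gbps per pin, the target rate quoted above.
for bus in (128, 192, 256):
    print(f"{bus}-bit bus at 36 Gbps: {peak_bandwidth_gbs(36, bus):.0f} GB/s peak")
```

These are theoretical ceilings; shipping cards may run the memory below its rated speed.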

Improved Efficiency

Built on Micron’s advanced DRAM process node, GDDR7 is designed to deliver improved power efficiency. For laptops and compact desktops, this could translate into better sustained performance and reduced thermal constraints.

For readers trying to understand how these improvements translate into real-world performance, it helps to look at how memory speed, latency, and capacity interact. We break this down in detail in our guide to DDR5 memory and what actually matters for performance; many of the same principles now apply to GPU memory as well.

What This Means for Gaming

Modern games are increasingly VRAM-intensive, especially at 1440p and above. Titles like Cyberpunk 2077 and Alan Wake 2 can exceed 8GB of VRAM when using high-resolution textures and ray tracing.

This has created a recurring issue for mid-range GPUs: strong compute performance paired with insufficient memory.

A shift to 12GB or 18GB configurations could:

- Reduce texture swapping and the stuttering it causes

- Improve frame-time consistency

- Extend the usable lifespan of mid-range GPUs

In practical terms, this could make “Ultra settings” more viable on hardware that previously struggled with memory limitations.

What This Means for Local AI

For AI workloads, VRAM capacity is often the limiting factor—not raw compute power.

Applications such as:

- Local large language models (LLMs)

- Stable Diffusion image generation

- AI-assisted creative tools

are all bounded by available memory, since a model’s weights generally need to fit in VRAM to run at full speed.

With 3GB modules enabling 12GB, 18GB, or even 24GB configurations on more accessible hardware, local AI workloads become significantly more practical outside of high-end systems.
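A back-of-the-envelope estimate shows why these capacity steps matter for local models. The sketch below approximates the memory needed for model weights as parameter count times bytes per parameter, plus a fixed allowance for working data. The 2GB overhead is an assumed placeholder rather than a measured value, and the function names are hypothetical.

```python
def weights_gb(params_billions, bits_per_param):
    """Approximate weight footprint in GB (1 GB taken as 1e9 bytes)."""
    return params_billions * bits_per_param / 8


def fits_in_vram(params_billions, bits_per_param, vram_gb, overhead_gb=2.0):
    """Rough check: weights plus an assumed overhead must fit in VRAM.

    overhead_gb is a placeholder for caches and activations; real usage
    varies with context length, batch size, and the runtime used.
    """
    return weights_gb(params_billions, bits_per_param) + overhead_gb <= vram_gb


# Example: a 7-billion-parameter model at 16-bit and 4-bit precision.
for bits in (16, 4):
    size = weights_gb(7, bits)
    for vram in (8, 12, 18, 24):
        verdict = "fits" if fits_in_vram(7, bits, vram) else "does not fit"
        print(f"7B @ {bits}-bit ({size:.1f}GB weights) on {vram}GB: {verdict}")
```

By this estimate, a 7B model at 16-bit precision needs about 14GB for weights alone, so it falls outside 8GB and 12GB cards but within an 18GB configuration.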

The Bigger Picture: A Memory-First Era

The introduction of 3GB GDDR7 modules reflects a broader shift in the industry. Performance is no longer defined purely by compute throughput. Instead, memory capacity, bandwidth, and efficiency are becoming equally important.

This trend is visible across:

- Gaming workloads that rely on large asset streaming

- AI applications that require large model footprints

- Hybrid workloads that combine both

In this context, Micron’s move is less about incremental improvement and more about enabling new GPU design strategies.

What to Watch Next

The real impact of 3GB GDDR7 modules will depend on adoption.

Key questions include:

- Will NVIDIA and AMD widely adopt 3GB modules in mid-range GPUs?

- Will pricing remain competitive compared to existing GDDR6 designs?

- How quickly will software scale to take advantage of increased VRAM?

With next-generation GPUs on the horizon, these answers may arrive sooner rather than later.

Final Take

Micron’s 3GB GDDR7 modules may not grab headlines in the same way a new GPU architecture does, but their impact could be just as important.

By enabling more flexible VRAM configurations, they address a long-standing limitation in GPU design. For gamers, that could mean smoother performance. For AI users, it could mean access to workloads that were previously out of reach.

The industry is moving beyond raw compute as the only metric that matters. Memory is now part of the performance equation—and Micron just changed how that equation can be solved.