For years, GPU comparisons have been driven by one easy-to-market number: raw compute. More TFLOPS, more hype, more performance headlines. But local AI is changing that equation. As large language models move onto desktops and workstations, the real bottleneck is increasingly not raw compute at all. It is memory.

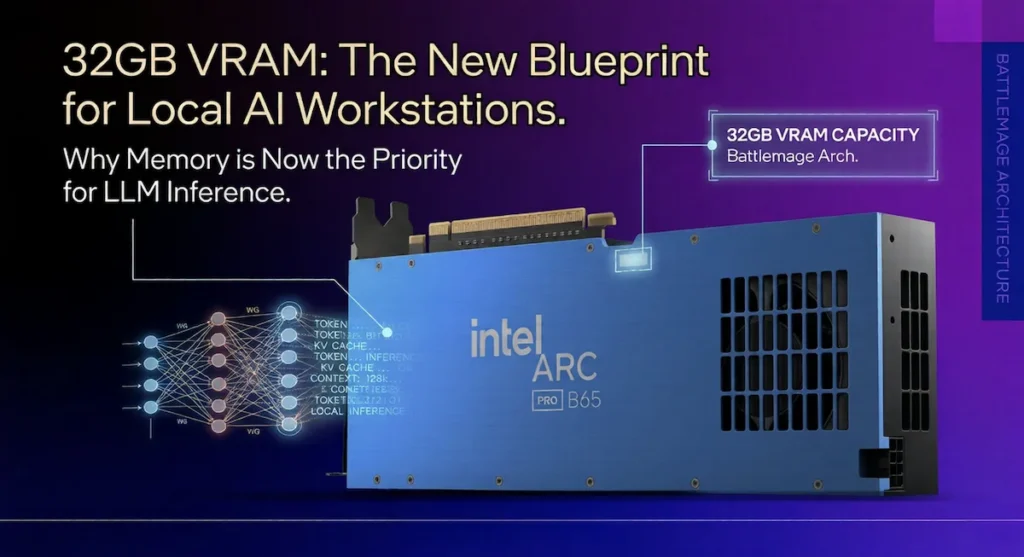

That is what makes Intel’s Arc Pro B65 so interesting. Built on Intel’s Battlemage-generation architecture, the card’s standout feature is not a flashy gaming angle or a halo-class compute claim. It is the simple fact that it reportedly brings 32GB of VRAM to a segment where many consumer GPUs still force compromises once LLM workloads grow beyond smaller models and shorter context windows.

For local inference, document analysis, coding assistants, retrieval-augmented generation, and on-prem AI experiments, that memory pool could matter more than many buyers expect. In a market still obsessed with peak throughput, Intel may be pushing a different idea: that for AI work, having enough memory to keep the whole job on the GPU can matter more than having the fastest silicon on paper.

Why VRAM Matters More Than Ever for Local AI

Modern LLM workloads are brutally memory-sensitive. Running models locally is not just about storing weights. GPU memory also has to handle intermediate activations, KV cache growth, and the extra footprint that comes with longer prompts, larger context windows, and less aggressive quantization.

That is why many otherwise powerful consumer GPUs start to feel cramped once users move beyond lightweight chatbot demos. A card can have impressive theoretical compute performance and still struggle in real-world AI use if it runs out of VRAM and starts leaning on system RAM, model splitting, or heavy precision tradeoffs.

In practical terms, the rough experience tiers look something like this:

- 12GB VRAM: Often workable for smaller models around the 7B class, but usually with tighter limits on context length and precision.

- 16GB VRAM: More flexible, but still restrictive once larger models or extended contexts enter the picture.

- 24GB VRAM: A far more comfortable zone for mid-sized local AI workloads and larger quantized models.

- 32GB VRAM: A meaningful step up for users who want higher-precision inference, longer contexts, and fewer workflow compromises.
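
As a rough sanity check on these tiers, the sketch below estimates the memory needed for model weights alone and tests it against each capacity. The 20% reserve for KV cache, activations, and runtime overhead is an assumed figure, the function names are illustrative, and real quantized formats usually carry slightly more than their nominal bits per weight, so treat the result as an approximation rather than a benchmark.

```python
GIB = 1024 ** 3  # bytes per GiB; card capacities are treated as GiB here


def weight_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GiB."""
    return params_billion * 1e9 * bits_per_weight / 8 / GIB


def fits(vram_gib: float, params_billion: float, bits_per_weight: float,
         reserve_fraction: float = 0.2) -> bool:
    """True if the weights fit while keeping reserve_fraction of VRAM free.

    The reserve stands in for KV cache, activations, and runtime
    overhead; 0.2 is an assumption, not a measured value.
    """
    usable = vram_gib * (1 - reserve_fraction)
    return weight_gib(params_billion, bits_per_weight) <= usable


if __name__ == "__main__":
    # (parameters in billions, bits per weight): illustrative combinations
    candidates = [(7, 4), (7, 16), (13, 8), (32, 4)]
    for vram in (12, 16, 24, 32):
        ok = [f"{p}B@{b}-bit" for p, b in candidates if fits(vram, p, b)]
        print(f"{vram} GiB: {', '.join(ok) or 'none'}")
```

Under these assumptions a 7B model at 16-bit precision needs about 13 GiB for weights, which is why it overflows a 12GB card but sits comfortably in 24GB or 32GB.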

That last category is where the Arc Pro B65 becomes more than just another spec-sheet curiosity. In AI workloads, 32GB is not a luxury number. It can be the difference between “this technically runs” and “this runs well enough to actually use.”

Context Windows Are Becoming a Bigger Deal

One of the least appreciated parts of the local AI shift is how important context capacity has become. It is no longer enough to run a model at all. Increasingly, the goal is to run it with enough memory headroom to handle longer documents, larger codebases, richer retrieval pipelines, or agent-like workflows without constant truncation.

A bigger VRAM pool can help enable:

- Longer token windows without hard compromises

- Less CPU offloading and the latency penalties that come with it

- More stable inference during sustained workloads

- Less reliance on aggressively low-bit quantization

For developers and researchers, this matters in very practical ways. A 32GB GPU can make multi-file code review, large document summarization, and extended RAG sessions far more usable on a single workstation. That does not automatically make Intel faster than NVIDIA, but it does create a real functional advantage in the kinds of tasks many local AI users now care about most.
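
The link between context length and memory can be made concrete with the usual key/value cache estimate: two tensors (keys and values) per layer, each holding one vector per KV head per token. The sketch below applies it to a hypothetical 8B-class layout (32 layers, 8 KV heads, head dimension 128, 16-bit cache). Those numbers are assumptions chosen for illustration, not the specification of any particular model.

```python
GIB = 1024 ** 3


def kv_cache_gib(n_layers: int, n_kv_heads: int, head_dim: int,
                 seq_len: int, bytes_per_elem: int = 2,
                 batch_size: int = 1) -> float:
    """Approximate KV cache size in GiB.

    The leading factor of 2 accounts for storing both keys and values.
    bytes_per_elem=2 assumes a 16-bit cache.
    """
    total_bytes = (2 * n_layers * n_kv_heads * head_dim
                   * seq_len * bytes_per_elem * batch_size)
    return total_bytes / GIB


if __name__ == "__main__":
    # Hypothetical model shape; adjust to the model actually in use.
    for tokens in (8_192, 32_768, 131_072):
        size = kv_cache_gib(n_layers=32, n_kv_heads=8, head_dim=128,
                            seq_len=tokens)
        print(f"{tokens:>7} tokens -> {size:.1f} GiB of KV cache")
```

With this layout the cache costs 128 KiB per token, so an 8K-token context takes about 1 GiB and a 128K-token context about 16 GiB, all of it on top of the weights. That growth is what a larger VRAM pool absorbs.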

Arc Pro B65 vs NVIDIA RTX 50 Series

This is where Intel’s pitch starts to stand apart. NVIDIA still holds the stronger software ecosystem and the higher raw compute ceiling, but the Arc Pro B65 appears to be targeting a different weakness in today’s GPU market: consumer cards still do not always scale VRAM as aggressively as local AI users would like.

Even in the RTX 50-series era, memory capacity remains one of the clearest dividing lines between consumer-oriented GPUs and cards that feel better suited for serious workstation AI use.

| Feature | Arc Pro B65 (32GB) | RTX 5070 / 5080 | RTX 5090 |
|---|---|---|---|
| VRAM | 32GB (reported) | 12GB / 16GB | 32GB, but priced as a halo-class option |
| Compute (TFLOPS) | Lower (expected) | Higher | Highest |
| Context Capacity for Local AI | Very strong on paper | Moderate | Strong, but costly |
| Primary Positioning | Pro / AI workstation | Consumer high-end | Enthusiast / halo |

That comparison matters because many local AI builders are not chasing the absolute fastest GPU available. They are trying to find the point where a single machine can run useful models locally without becoming a money pit. In that kind of buying decision, memory capacity can become the deciding factor faster than benchmark charts suggest.

Even as NVIDIA moves through the RTX 50 generation, VRAM in its consumer cards still does not necessarily keep pace with what local AI users need. That creates a potential opening for Intel. A lower-compute, memory-heavy GPU can make more sense than a faster consumer card if the faster card is forced into offloading, model trimming, or tighter context limits.

Where Intel Could Actually Gain Ground

Intel does not need to beat NVIDIA everywhere for the Arc Pro B65 to matter. It only needs to win in enough real-world AI scenarios to justify its existence.

1. Larger Models on One Card

A common pain point with consumer GPUs is that once model size climbs, users start making ugly compromises. They split workloads, accept slower offloading behavior, or drop to more aggressive quantization than they would prefer.

If the B65 can keep larger models fully resident in local VRAM, that alone could make it attractive for workstation users who care more about practicality than peak benchmark bragging rights.
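
To show what "fully resident" means in practice, here is a minimal sketch of how a runtime might decide how many transformer layers stay on the GPU, similar in spirit to the `--n-gpu-layers` option in llama.cpp. It assumes all layers are the same size and that a fixed amount of VRAM is reserved for KV cache and buffers. The model size, layer count, and reserve are hypothetical values, and the helper name is made up for this example.

```python
def gpu_resident_layers(model_gib: float, n_layers: int,
                        vram_gib: float, reserved_gib: float) -> int:
    """Number of equally sized layers that fit in the VRAM left over
    after reserving space for KV cache and runtime buffers."""
    per_layer_gib = model_gib / n_layers
    budget_gib = max(vram_gib - reserved_gib, 0.0)
    return min(n_layers, int(budget_gib // per_layer_gib))


if __name__ == "__main__":
    # Hypothetical 20 GiB model with 40 layers, 4 GiB reserved.
    for vram in (12, 16, 24, 32):
        on_gpu = gpu_resident_layers(20.0, 40, vram, 4.0)
        print(f"{vram} GiB card: {on_gpu}/40 layers on GPU, "
              f"{40 - on_gpu} offloaded")
```

In this example a 16 GiB card keeps 24 of 40 layers on the GPU and pushes the rest to system memory, while a 32 GiB card holds all 40. Every offloaded layer adds transfer or CPU compute time per token, which is the compromise described above.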

2. Better Headroom for Higher Precision

Cards with smaller VRAM pools often push users toward 4-bit or 8-bit quantization earlier than ideal. Those approaches can be extremely useful, but they are still tradeoffs. More memory creates more room for mixed precision or higher-quality inference settings that preserve model behavior better.

That will not matter equally for every workload, but for users building tools around local reasoning, coding, document analysis, or enterprise knowledge tasks, output quality can matter just as much as raw speed.
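
The precision tradeoff can be illustrated with a deliberately naive experiment: quantize a set of synthetic weights to 4 and 8 bits with a single per-tensor scale, convert them back, and compare the error. Production quantizers use group-wise scales and other refinements that perform considerably better, so this is a sketch of the principle under simplified assumptions, not a model of any specific format.

```python
import random


def quantize_roundtrip(values, bits):
    """Symmetric uniform quantization to `bits` bits, then dequantize.

    Uses one scale for the whole list (per-tensor scaling), which is
    the simplest possible scheme.
    """
    qmax = 2 ** (bits - 1) - 1
    scale = max(abs(v) for v in values) / qmax
    if scale == 0:
        return list(values)
    return [max(-qmax, min(qmax, round(v / scale))) * scale for v in values]


def mean_abs_error(original, restored):
    """Average absolute difference between two equal-length lists."""
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)


if __name__ == "__main__":
    random.seed(0)
    # Synthetic weights: small Gaussian values, an assumption for the demo.
    weights = [random.gauss(0.0, 0.02) for _ in range(10_000)]
    for bits in (8, 4):
        err = mean_abs_error(weights, quantize_roundtrip(weights, bits))
        print(f"{bits}-bit mean absolute error: {err:.6f}")
```

The 4-bit round trip has only 15 representable levels against 255 for 8-bit, so its reconstruction error is noticeably larger. More VRAM lets users stay at the higher bit width when that difference matters for their workload.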

3. Longer Context Workloads

Long prompts and large retrieval pipelines are becoming normal. Whether the use case is contract review, research summarization, or codebase assistance, the ability to keep more of the session in GPU memory has a direct effect on usability.

This is one area where a 32GB card could punch above its weight. Even if the B65 trails consumer NVIDIA cards in peak throughput, it could still feel more capable in long-context scenarios where memory limits become the first wall users hit.

Why Battlemage Matters Beyond Gaming

Intel’s Battlemage architecture is often discussed through a gaming lens, but the Arc Pro B65 suggests a more interesting angle. Instead of just chasing mainstream gaming relevance, Intel appears positioned to serve a growing class of buyers who care about AI utility on the desktop.

That does not mean architecture details stop mattering. In fact, they matter a great deal.

Memory Bandwidth Still Matters

VRAM size alone is not enough. If the memory subsystem cannot feed the GPU efficiently, then headline capacity can look better on paper than it does in practice. A 32GB card still needs sufficient bandwidth and low enough latency to avoid turning into a memory-rich but throughput-limited compromise.

That is one reason benchmarks will matter so much once the card is broadly tested. Capacity is the attention-grabber, but memory performance is what determines whether that capacity feels genuinely useful.
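
A common back-of-the-envelope way to see why bandwidth matters: during single-stream token generation with a dense model, roughly all of the weights are read once per token, so memory bandwidth divided by model size gives an upper bound on tokens per second. The sketch below applies that rule. The bandwidth figures are hypothetical placeholders, not B65 specifications, and real throughput will land below the ceiling.

```python
def decode_ceiling_tokens_per_s(bandwidth_gb_s: float,
                                weights_gb: float) -> float:
    """Upper bound on single-stream decode speed for a dense model,
    assuming every weight byte is read once per generated token."""
    return bandwidth_gb_s / weights_gb


if __name__ == "__main__":
    weights_gb = 16.0  # e.g. a model quantized down to about 16 GB
    for bandwidth in (400.0, 800.0):  # hypothetical GB/s values
        ceiling = decode_ceiling_tokens_per_s(bandwidth, weights_gb)
        print(f"{bandwidth:.0f} GB/s -> at most {ceiling:.0f} tokens/s")
```

Under this approximation, doubling bandwidth doubles the ceiling, while filling more of a 32GB card with a larger model lowers it. Capacity decides whether a model fits; bandwidth decides how quickly it responds once it does.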

Software Support Will Make or Break It

The largest challenge for Intel is not the hardware headline. It is the ecosystem.

NVIDIA’s CUDA stack remains the default path for a huge share of AI development. Intel has improved its position with oneAPI, OpenVINO, and growing framework support, but buyers will still care about practical questions:

- How easily does it work with common PyTorch-based workflows?

- How well does it integrate with llama.cpp and local inference tools?

- How reliable is the driver stack under long-running AI loads?

If Intel reduces friction enough, the B65 could become a compelling “good enough plus more memory” option. If compatibility remains patchy, its VRAM advantage risks being stranded behind workflow headaches.
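
For the PyTorch question, a first compatibility check is whether the framework can see the card at all. Recent PyTorch releases expose Intel GPUs through an `xpu` device type, and the sketch below probes for it before falling back to CUDA or the CPU. Whether the B65 specifically is supported by a given PyTorch and driver combination is not something the available information confirms, so this is only a starting point; `pick_device` is an illustrative helper name.

```python
def pick_device() -> str:
    """Return "xpu", "cuda", or "cpu" depending on what PyTorch reports.

    Falls back to "cpu" if PyTorch is not installed or the installed
    build has no Intel GPU (xpu) backend.
    """
    try:
        import torch
    except ImportError:
        return "cpu"

    # Older PyTorch builds may lack the xpu module, hence getattr.
    xpu = getattr(torch, "xpu", None)
    if xpu is not None and xpu.is_available():
        return "xpu"
    if torch.cuda.is_available():
        return "cuda"
    return "cpu"


if __name__ == "__main__":
    print(f"Selected device: {pick_device()}")
```

Seeing `xpu` here only confirms that the driver and framework can talk to each other. Sustained stability and operator coverage still have to be tested with the actual workload.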

Driver Maturity Is Not Optional

Professional buyers are usually less forgiving than hobbyists when it comes to stability. Crashes, odd behavior under sustained inference, or weak optimization support can cancel out an otherwise strong hardware value proposition.

That is why the B65’s real opportunity is not just about launching with 32GB. It is about proving that Intel can translate that memory advantage into a dependable workstation experience.

Why This Could Matter for Real Buyers

For a certain class of user, the Arc Pro B65 could hit a very attractive middle ground.

It may appeal to:

- Developers building local AI prototypes

- Researchers who want more model headroom without enterprise GPU prices

- Small teams experimenting with on-prem inference

- Privacy-sensitive organizations that want less cloud dependence

That is an increasingly important niche. Not every AI buyer wants to rent cloud GPUs forever, and not every team can justify datacenter-class accelerators. There is a growing market for hardware that is more capable than a consumer GPU, but less financially brutal than true enterprise silicon.

If Intel prices the B65 intelligently, it could serve as a bridge product between those two worlds.

The Bigger Story: AI Is Changing What “GPU Value” Means

The most interesting part of the Arc Pro B65 may be what it says about the broader market. For years, the GPU value conversation was dominated by gaming frame rates and compute headlines. Local AI is starting to force a different discussion.

Suddenly, buyers are asking:

- Can this card hold a larger model without offloading?

- Can it handle longer contexts without collapsing in performance?

- Can it make a single workstation viable for serious AI experimentation?

Those are not fringe questions anymore. They are becoming central to how advanced users evaluate desktop hardware.

That is why the Arc Pro B65 is worth watching even if it never matches NVIDIA’s raw performance leadership. It represents a different way of thinking about AI hardware value. In a market where consumer GPUs often still feel memory-constrained, a 32GB card aimed at workstation users could matter far more than its TFLOPS number suggests.

What Is Confirmed vs. What Still Needs Proof

There is a promising thesis here, but there are still important open questions.

What appears clear so far:

- The Arc Pro B65 is positioned around a 32GB VRAM configuration

- Intel is targeting professional and AI-adjacent workloads

- The card is tied to Intel’s Battlemage-generation strategy

What still matters before drawing final conclusions:

- Real-world LLM and inference benchmarks

- Actual memory bandwidth and architectural balance

- Pricing relative to NVIDIA alternatives

- Driver maturity and software compatibility

That last list is what will determine whether the B65 becomes a genuine AI workstation disruptor or simply an interesting spec sheet.

While GPUs often dominate AI discussions, CPU platform longevity, memory compatibility, and overall system cost still play a critical role in building a balanced local AI workstation.

The Bottom Line

Intel’s Arc Pro B65 stands out because it targets a problem that many GPU launches still downplay: local AI workloads are often constrained more by memory than by raw compute. A 32GB VRAM configuration will not automatically make Intel the new leader in desktop AI, but it could make the card unexpectedly attractive for users who need larger models, longer contexts, and a more practical single-workstation setup.

In that sense, the Arc Pro B65 is not just another GPU launch story. It may be an early sign that the AI era is rewriting the rules of what desktop buyers should prioritize. And if that shift continues, memory-heavy GPUs could start looking a lot smarter than headline-chasing benchmark monsters.