AMD is expanding its workstation graphics lineup with the Radeon AI Pro R9700 and Radeon AI Pro R9600, two RDNA 4-based professional GPUs aimed at local AI development and inference, creators and researchers, and compact workstation or server deployments.

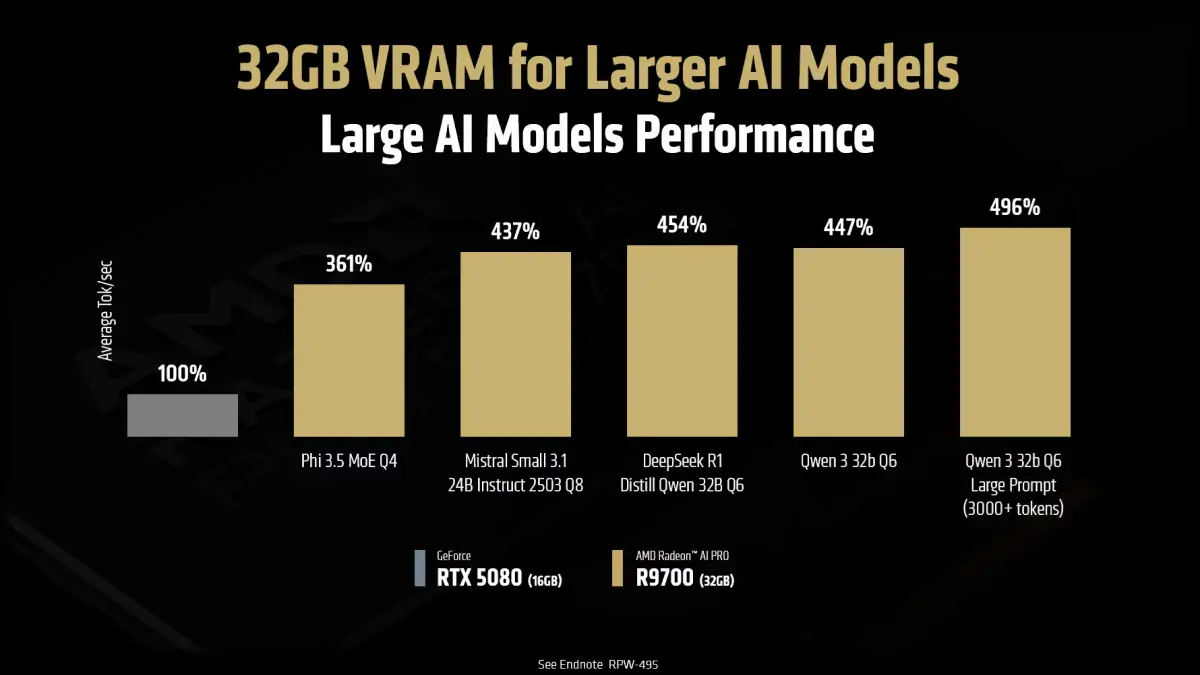

The headline feature is simple but important: 32GB of GDDR6 VRAM. For local AI builders, that memory capacity may matter more than almost anything else on the spec sheet. Larger models, bigger context windows, image-generation workflows, and AI development tools can quickly run into memory limits on mainstream consumer GPUs.

With the Radeon AI Pro R9700 and R9600, AMD is trying to create a more accessible workstation-class path for users who need more VRAM than a typical gaming GPU offers, but do not necessarily want to jump straight into data-center accelerator pricing.

AMD’s Radeon AI Pro R9700 and R9600 are not just graphics cards with larger memory buffers. They show how local AI hardware is becoming its own category, where VRAM capacity, software support, cooling design, and workstation deployment matter as much as gaming performance.

The Big Feature: 32GB of GDDR6 VRAM

The most important number across AMD’s new Radeon AI Pro cards is 32GB.

For gaming, VRAM is often discussed in terms of texture quality, resolution, and future-proofing. For local AI, the conversation is more direct. If a model or workload does not fit comfortably into GPU memory, performance can drop sharply or the workload may not run in a practical way at all.
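
As a rough illustration of what "fits comfortably" means, the sketch below estimates an LLM's memory footprint as weight storage plus a key/value (KV) cache that grows with context length. The model shape in the example (a hypothetical 32-billion-parameter model with 64 layers, 8 KV heads, and 128-dimensional heads) and the 10% reserve for runtime overhead are illustrative assumptions. They are not measurements of any specific model or of AMD's cards.

```python
from dataclasses import dataclass


@dataclass
class ModelShape:
    """Hypothetical transformer shape, used only for illustration."""
    params_billion: float
    layers: int
    kv_heads: int
    head_dim: int


def weights_gb(shape: ModelShape, bits_per_weight: float) -> float:
    # Parameters x bytes per parameter, in decimal gigabytes (1 GB = 1e9 bytes).
    return shape.params_billion * 1e9 * bits_per_weight / 8 / 1e9


def kv_cache_gb(shape: ModelShape, context_tokens: int, bytes_per_value: int = 2) -> float:
    # Keys and values (the factor of 2) for every layer, KV head, head dimension, and token.
    values = 2 * shape.layers * shape.kv_heads * shape.head_dim * context_tokens
    return values * bytes_per_value / 1e9


def fits(total_gb: float, vram_gb: float, reserve_fraction: float = 0.10) -> bool:
    # Keep an assumed slice of VRAM free for activations, runtime buffers, and the driver.
    return total_gb <= vram_gb * (1 - reserve_fraction)


if __name__ == "__main__":
    model = ModelShape(params_billion=32, layers=64, kv_heads=8, head_dim=128)
    for bits in (4, 8):
        for ctx in (8_192, 32_768):
            total = weights_gb(model, bits) + kv_cache_gb(model, ctx)
            print(f"{bits}-bit, {ctx:>6} tokens: {total:5.1f} GB, fits in 32 GB: {fits(total, 32)}")
```

Under these assumptions, the 4-bit variant fits at both context lengths while the 8-bit variant does not, which is exactly the kind of trade-off extra VRAM is meant to ease.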

That is why AMD is putting so much emphasis on high-capacity local AI. The Radeon AI Pro R9700 and R9600 both use 32GB of GDDR6 memory on a 256-bit memory interface, with listed memory bandwidth up to 640GB/s.
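
The listed bandwidth lines up with simple bus arithmetic: peak bandwidth is the bus width in bytes multiplied by the per-pin data rate. Working backward from AMD's 256-bit and 640GB/s figures implies an effective rate of 20 Gbps per pin. That rate is derived here from the two listed numbers, not quoted from AMD.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bytes per transfer across the whole bus x per-pin data rate."""
    return bus_width_bits / 8 * data_rate_gbps


def implied_data_rate_gbps(bus_width_bits: int, bandwidth_gb_s: float) -> float:
    """Work backward from a listed bandwidth to the per-pin data rate it implies."""
    return bandwidth_gb_s / (bus_width_bits / 8)


# AMD's listed figures for both cards: 256-bit interface, up to 640GB/s.
rate = implied_data_rate_gbps(256, 640)
print(f"Implied per-pin data rate: {rate:.1f} Gbps")
print(f"Round trip check: {peak_bandwidth_gb_s(256, rate):.0f} GB/s")
```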

That gives developers and builders more room to experiment with local LLMs, text-to-image models, AI coding assistants, retrieval workflows, and creator tools without immediately relying on cloud GPUs.

Radeon AI Pro R9700: The Flagship Local AI Workstation Card

The Radeon AI Pro R9700 is the higher-performance option in AMD’s new AI Pro stack.

It is built on AMD’s RDNA 4 architecture and features 64 compute units, 128 AI accelerators, and 4096 stream processors. AMD lists peak FP32 performance at up to 47.8 TFLOPs, positioning the card for AI development, local inference, workstation graphics, and creator workloads.
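
AMD's figures here do not include a clock speed, but the FP32 number can be sanity-checked against the shader count. Peak FP32 throughput is conventionally calculated as stream processors × FLOPs per processor per clock × clock speed. The sketch below assumes each stream processor can dual-issue a fused multiply-add (counted as 2 FLOPs), giving 4 FLOPs per clock. Under that assumption, the 47.8 TFLOPs figure implies a peak clock of roughly 2.9 GHz. Both the 4-FLOP factor and the resulting clock are inferences, not AMD-published numbers.

```python
def implied_clock_ghz(peak_tflops: float, stream_processors: int, flops_per_sp_per_clock: int) -> float:
    """Solve TFLOPS = SPs x FLOPs per SP per clock x GHz / 1000 for the clock in GHz."""
    return peak_tflops * 1000 / (stream_processors * flops_per_sp_per_clock)


# Assumption: one FMA counts as 2 FLOPs and each SP can dual-issue, giving 4 FLOPs per clock.
ASSUMED_FLOPS_PER_SP_PER_CLOCK = 4

clock = implied_clock_ghz(47.8, 4096, ASSUMED_FLOPS_PER_SP_PER_CLOCK)
print(f"Implied R9700 peak clock: {clock:.2f} GHz")

# Without dual issue (2 FLOPs per clock) the implied clock would be an implausible ~5.8 GHz,
# which is why the 4-FLOP assumption is used above.
print(f"Without dual issue: {implied_clock_ghz(47.8, 4096, 2):.2f} GHz")
```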

For local AI users, though, the performance number is only part of the story. The R9700’s 32GB memory buffer is what gives it a clear role in the market. It is designed for people who want a dedicated workstation GPU that can handle larger local models than many mainstream cards, while also remaining useful for professional visualization and creative applications.

Radeon AI Pro R9700 Key Specs

- Architecture: AMD RDNA 4

- Compute Units: 64

- AI Accelerators: 128

- Stream Processors: 4096

- Memory: 32GB GDDR6

- Memory Interface: 256-bit

- Peak Memory Bandwidth: Up to 640GB/s

- Peak FP32 Performance: Up to 47.8 TFLOPs

- Target Workloads: Local AI, workstation graphics, inference, development, and creator workflows

That makes the R9700 the card to watch for users who want a stronger local AI workstation build, especially if they are trying to move beyond the limits of 12GB, 16GB, or even some 24GB GPUs.
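
To make the 12GB/16GB/24GB/32GB comparison concrete, the sketch below computes the largest model, in billions of parameters, whose weights alone fit at common precisions after setting aside an assumed 20% of VRAM for KV cache, activations, and runtime overhead. The 20% reserve and the bits-per-weight values are illustrative assumptions. Real limits depend on the model architecture, context length, and inference runtime.

```python
VRAM_SIZES_GB = (12, 16, 24, 32)
# Approximate bits per weight; real quantized files carry some extra metadata.
BITS_PER_WEIGHT = {"FP16": 16, "8-bit": 8, "4-bit": 4}
RESERVE_FRACTION = 0.20  # assumed headroom for KV cache, activations, and runtime buffers


def max_params_billion(vram_gb: float, bits_per_weight: float, reserve: float = RESERVE_FRACTION) -> float:
    """Largest parameter count (in billions) whose weights fit in the usable VRAM."""
    usable_bytes = vram_gb * 1e9 * (1 - reserve)
    return usable_bytes / (bits_per_weight / 8) / 1e9


print("VRAM   " + "  ".join(f"{fmt:>6}" for fmt in BITS_PER_WEIGHT))
for vram in VRAM_SIZES_GB:
    row = "  ".join(f"{max_params_billion(vram, bits):5.1f}B" for bits in BITS_PER_WEIGHT.values())
    print(f"{vram:>2} GB  {row}")
```

Under these assumptions, moving from 24GB to 32GB raises the 4-bit ceiling from roughly 38B to roughly 51B parameters.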

Radeon AI Pro R9600 and R9600D: 32GB VRAM in a Lower-Power Package

The Radeon AI Pro R9600 and R9600D take a different approach. These cards keep the same 32GB of GDDR6 VRAM but scale back the compute configuration compared with the R9700.

AMD lists the R9600-class cards with 48 compute units and 3072 stream processors. The R9600D also carries a lower 150W total board power, which could make it attractive for efficient workstations, inference servers, edge AI systems, and rack-style deployments.

The R9600D is especially interesting because partner models use passive cooling designs. That does not mean the card is meant to sit in a normal desktop case with no airflow. Passive professional GPUs usually depend on strong chassis airflow, making them better suited for servers, workstations, or rackmount systems designed around front-to-back cooling.
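
A first-order way to see why passive cards need chassis airflow is that all of the board's heat must be carried away by air moving through its heatsink. The sketch below solves the sensible-heat equation P = ρ · c_p · Q · ΔT for the volumetric airflow Q, using standard sea-level air properties. The 150W figure is the R9600D's listed board power. The 10°C and 15°C air temperature rises are assumptions, and real servers need extra margin for uneven flow, heatsink resistance, and inlet temperature.

```python
AIR_DENSITY_KG_M3 = 1.2   # approximate density of air at sea level, around 20°C
AIR_CP_J_KG_K = 1005.0    # specific heat of air at constant pressure
M3_S_TO_CFM = 2118.88     # cubic metres per second -> cubic feet per minute


def required_airflow_cfm(heat_watts: float, delta_t_c: float) -> float:
    """Idealized minimum airflow to remove heat_watts with an air temperature rise of delta_t_c."""
    flow_m3_s = heat_watts / (AIR_DENSITY_KG_M3 * AIR_CP_J_KG_K * delta_t_c)
    return flow_m3_s * M3_S_TO_CFM


for delta_t in (10, 15):  # assumed allowable temperature rise across the card
    print(f"150 W, dT={delta_t} C: ~{required_airflow_cfm(150, delta_t):.0f} CFM through the heatsink")
```

A desktop case with weak or unfocused airflow may move plenty of air overall while pushing very little of it through a passive card's fins, which is the failure mode the paragraph above warns about.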

Radeon AI Pro R9600 / R9600D Key Specs

- Architecture: AMD RDNA 4

- Compute Units: 48

- Stream Processors: 3072

- Memory: 32GB GDDR6

- Memory Interface: 256-bit

- Peak Memory Bandwidth: Up to 640GB/s

- R9600D Board Power: 150W

- Target Workloads: AI inference, edge systems, compact workstations, rack deployments, and memory-heavy local AI tasks

This makes the R9600 lineup less of a pure performance play and more of a practical deployment option. For some AI workloads, a GPU with fewer compute units but the same 32GB buffer and the same listed 640GB/s of memory bandwidth can still be more useful than a faster GPU with less VRAM.
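
One reason memory can matter more than compute: when a single user generates text with an LLM, each new token typically requires reading roughly all of the model's weights from VRAM, so decode speed is often bounded by memory bandwidth rather than shader count. The sketch below computes that ceiling. It ignores KV-cache reads, caching effects, batching, and software efficiency, so real throughput will be lower, and the model sizes are hypothetical examples.

```python
BANDWIDTH_GB_S = 640  # listed peak for both the R9700 and the R9600


def decode_tokens_per_s_ceiling(weights_gb: float, bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound for single-stream decode if every token reads all weights once."""
    return bandwidth_gb_s / weights_gb


# Hypothetical model sizes, in GB of weights.
for label, size_gb in (("8B @ 8-bit", 8.0), ("32B @ 4-bit", 16.0), ("24B @ 8-bit", 24.0)):
    print(f"{label:<12} ~{decode_tokens_per_s_ceiling(size_gb):.0f} tokens/s ceiling")
```

Because this ceiling depends only on bandwidth and model size, it is the same for both cards. The R9700's extra compute units tend to matter more for prompt processing and batched serving, which lean more heavily on compute.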

For some local AI users, the R9600 may end up being the more sensible choice. It keeps the 32GB memory target while scaling back compute and, in the R9600D's case, board power to 150W, which matters for servers, edge systems, and always-on inference boxes.
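
For always-on inference boxes, board power translates into a recurring energy cost. The sketch below produces an upper-bound estimate from the R9600D's listed 150W board power, assuming the card draws full board power for a given fraction of the year (real average draw is usually lower, especially when idle). The electricity price is a hypothetical placeholder in no particular currency.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_kwh(board_power_w: float, duty_cycle: float = 1.0) -> float:
    # duty_cycle: assumed fraction of the year spent at full board power.
    return board_power_w * HOURS_PER_YEAR * duty_cycle / 1000


PRICE_PER_KWH = 0.15  # hypothetical electricity price; substitute your local rate

for duty in (1.0, 0.5):
    kwh = annual_energy_kwh(150, duty)
    print(f"150 W at {duty:.0%} duty: {kwh:,.0f} kWh/yr, ~{kwh * PRICE_PER_KWH:,.0f} per year at {PRICE_PER_KWH}/kWh")
```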

Why AMD Is Targeting Local AI

The local AI market is changing quickly.

Developers, creators, small businesses, researchers, and hobbyists are increasingly experimenting with models that can run on their own hardware instead of relying entirely on cloud services. That can reduce recurring costs, improve privacy, lower latency, and give users more control over their workflows.

But local AI also exposes a hardware problem. Many consumer GPUs are fast enough for some AI tasks, but their memory capacity can become a hard limit. Once the workload grows beyond the available VRAM, the experience can get slower, more complicated, or impractical.

AMD’s Radeon AI Pro lineup is designed to address that middle ground. These are not consumer gaming cards, and they are not massive data-center accelerators. They sit in between: workstation-class GPUs with enough memory to make local AI more realistic for a wider set of users.

ROCm and Software Support Are Part of the Pitch

AMD is also tying the Radeon AI Pro R9700 to ROCm, its software platform for GPU compute and AI workloads.

That matters because AI hardware is only useful if the software stack can support the tools people actually use. For local AI users, driver support, framework compatibility, PyTorch setup, operating system support, and model tooling can matter just as much as raw GPU specs.

Nvidia still has a major advantage with CUDA in many AI workflows, but AMD is clearly trying to make ROCm a more practical path for local AI developers and workstation users. The Radeon AI Pro cards are part of that push.

For buyers, the practical advice is simple: before choosing any AI GPU, check the specific software you plan to run. A card may look excellent on paper, but the real value depends on whether your inference tools, model formats, and operating system are well supported.
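
As a starting point for that check, the snippet below reports what a local PyTorch install can see. It relies on documented PyTorch attributes: ROCm builds of PyTorch set `torch.version.hip` and expose AMD GPUs through the `torch.cuda` API. It only confirms that the framework detects a GPU; it does not prove that a particular inference tool or model format is supported. If PyTorch is not installed, it reports that instead of failing.

```python
import importlib.util


def describe_gpu_stack() -> dict:
    """Report which GPU backend, if any, the installed PyTorch build can use."""
    report = {"torch_installed": importlib.util.find_spec("torch") is not None}
    if not report["torch_installed"]:
        return report

    import torch

    # ROCm builds set torch.version.hip; CUDA builds set torch.version.cuda instead.
    report["torch_version"] = torch.__version__
    report["hip_version"] = getattr(torch.version, "hip", None)
    report["cuda_version"] = getattr(torch.version, "cuda", None)
    # On ROCm builds, AMD GPUs are reported through the torch.cuda namespace.
    report["gpu_available"] = torch.cuda.is_available()
    if report["gpu_available"]:
        props = torch.cuda.get_device_properties(0)
        report["device_name"] = props.name
        report["vram_gb"] = round(props.total_memory / 1e9, 1)
    return report


if __name__ == "__main__":
    for key, value in describe_gpu_stack().items():
        print(f"{key}: {value}")
```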

AMD’s own positioning makes the memory argument clear: the Radeon AI Pro R9700 is not being pitched only as a faster workstation GPU, but as a 32GB VRAM card for larger local AI models and heavier inference workloads.

Who These Cards Are For

The Radeon AI Pro R9700 and R9600 are not aimed at one single type of buyer. They could make sense for several groups.

Local AI Developers

Developers experimenting with LLMs, coding assistants, retrieval-augmented generation, and agentic workflows may benefit from having a 32GB GPU in a local workstation. The extra VRAM gives more flexibility when testing larger models or running multiple AI components together.
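
Running several AI components together means their memory footprints add up. The sketch below totals a hypothetical local stack (a chat model, an embedding model, a reranker, and an image model) against a 32GB budget, loading components in order until the next one no longer fits. Every component size is an illustrative placeholder, and the reserve fraction is an assumption.

```python
VRAM_GB = 32
RESERVE_FRACTION = 0.10  # assumed headroom for runtime buffers and fragmentation

# Hypothetical footprints in GB (weights plus working memory); replace with measured values.
components = {
    "chat model (4-bit) + KV cache": 20.0,
    "embedding model": 1.5,
    "reranker": 1.5,
    "image model": 8.0,
}


def plan(components: dict[str, float], vram_gb: float, reserve: float) -> tuple[list[str], list[str]]:
    """Admit components in listed order while they fit; return (loaded, deferred)."""
    budget = vram_gb * (1 - reserve)
    loaded, deferred, used = [], [], 0.0
    for name, size_gb in components.items():
        if used + size_gb <= budget:
            loaded.append(name)
            used += size_gb
        else:
            deferred.append(name)
    return loaded, deferred


loaded, deferred = plan(components, VRAM_GB, RESERVE_FRACTION)
print("Resident on GPU:", loaded)
print("Needs offload or a second GPU:", deferred)
```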

Creators and Technical Professionals

Creators who use GPU-accelerated tools may find the R9700 especially interesting because it combines workstation graphics capabilities with a large memory pool. That could make it useful for mixed workflows involving rendering, AI image generation, video tools, and professional visualization.

Small Businesses and Labs

Small teams that want to experiment with private AI tools may prefer local hardware over renting cloud GPUs for every test. A 32GB workstation GPU can become a shared development box, internal inference system, or prototype machine.

Edge AI and Server Builders

The R9600D is more specialized, but that is part of its appeal. Its 150W board power and passively cooled partner designs may fit systems where AI inference needs to happen close to the data, inside a server, or in an industrial environment.

The Practical Caution: Cooling, Drivers, and Real Workloads

The Radeon AI Pro R9700 and R9600 look promising on paper, but buyers should still think carefully about deployment.

First, cooling design matters. A blower-style workstation card can make sense in a desktop workstation or multi-GPU system. A passive card can make sense in a server chassis with strong airflow. But putting a passive card into the wrong case can create thermal problems.

Second, software support matters. Local AI users should confirm ROCm compatibility, operating system support, driver maturity, and application support before buying.

Third, VRAM is important, but it is not magic. A 32GB card expands what users can do locally, but it does not turn a desktop workstation into a large AI training cluster. These cards are best understood as local inference, development, and workstation AI products rather than replacements for high-end data-center systems.
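
A commonly cited rule of thumb illustrates the gap between inference and training. Full fine-tuning with the Adam optimizer in mixed precision needs roughly 16 bytes per parameter before counting activations: 2 for FP16 weights, 2 for FP16 gradients, 4 for an FP32 master copy of the weights, and 8 for Adam's two FP32 moment estimates. The sketch applies that rule to a few arbitrary model sizes. Activations and framework overhead are excluded, so real training needs more, and techniques such as parameter-efficient fine-tuning or optimizer offloading change the numbers.

```python
# Bytes per parameter for mixed-precision training with Adam (activations excluded).
BYTES_PER_PARAM = {
    "fp16 weights": 2,
    "fp16 gradients": 2,
    "fp32 master weights": 4,
    "fp32 Adam moments (m, v)": 8,
}


def full_finetune_gb(params_billion: float) -> float:
    # 1e9 params x bytes per param / 1e9 bytes per GB cancels out.
    return params_billion * sum(BYTES_PER_PARAM.values())


def inference_weights_gb(params_billion: float, bits: int = 4) -> float:
    return params_billion * bits / 8


for size in (1, 7, 13):
    train_gb = full_finetune_gb(size)
    infer_gb = inference_weights_gb(size)
    print(f"{size:>2}B params: ~{train_gb:.0f} GB to fully fine-tune "
          f"(fits in 32 GB: {train_gb <= 32}), ~{infer_gb:.1f} GB of 4-bit weights to run")
```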

Why This Matters for InsightTechDaily Readers

The bigger story is that AI hardware is becoming more specialized for local users.

For a long time, anyone experimenting with local AI had to make awkward choices. Consumer GPUs were accessible but often memory-limited. Enterprise accelerators had the memory, but the pricing and deployment requirements could be out of reach. AMD’s Radeon AI Pro R9700 and R9600 suggest that the middle of the market is starting to fill in.

That does not mean these cards are cheap impulse buys. It means the product category is becoming more serious. Local AI is no longer just a hobbyist experiment running on whatever gaming GPU happens to be available. It is becoming a workstation market with products designed around AI memory requirements, software stacks, and professional deployments.

The most important takeaway is not that AMD has one new AI GPU. It is that 32GB local AI cards are becoming a defined workstation category. That gives developers and builders more options between mainstream gaming GPUs and expensive data-center accelerators.

Bottom Line

AMD’s Radeon AI Pro R9700 and R9600 bring a clearer local AI focus to the company’s workstation GPU lineup.

The R9700 is the stronger option, with more compute resources and a clear role as a high-capacity local AI workstation card. The R9600 and R9600D keep the 32GB VRAM story but shift toward lower-power, more deployment-friendly systems.

For local AI users, the appeal is straightforward: more memory, workstation-class positioning, and a path into AMD’s ROCm ecosystem. For developers and builders who have been running into the VRAM wall, these cards are worth watching closely.

The local AI hardware race is no longer just about the fastest GPU. It is about which platform gives builders the right mix of memory, software support, power, price, and deployment flexibility. AMD’s Radeon AI Pro R9700 and R9600 are another sign that this market is becoming real.