AI is no longer just something you open in a chatbot window.

It is increasingly being embedded into the software, browsers, productivity suites, design tools, and developer platforms people already use every day. That shift matters more than benchmark charts or model branding, because it changes how technology actually feels in practice.

In 2026, the real AI story is not just about which company has the smartest model. It is about which models are quietly reshaping the tools behind modern work, creativity, and everyday computing.

Here are five AI models and platforms that help explain where that transition is heading, and why it matters for users beyond the AI enthusiast crowd.

1. Gemini 2.5 Pro and the Rise of Long-Context AI

Google’s Gemini 2.5 Pro represents one of the clearest examples of where frontier AI is moving: larger context windows, stronger multimodal understanding, and more advanced reasoning across longer documents and richer inputs.

Why it matters: Models with long-context capability can work across full reports, large research sets, long email threads, codebases, and mixed media inputs without losing track of earlier material as quickly. That makes them more useful as real assistants instead of novelty chat interfaces.

What it means for everyday tools: For most users, this shift does not show up as “I am opening Gemini 2.5 Pro.” It shows up as software getting better at summarizing pages, understanding screenshots, pulling context from multiple sources, and helping with more complicated multi-step tasks.

Why it changes the market: This is part of a broader move from simple AI features toward systems that can understand context over time. The more software can remember, compare, and reason across full workflows, the more useful it becomes in real-world productivity.

This also connects to the larger platform shift around AI PCs and memory-heavy workloads, where local processing and system architecture are becoming more important. We explored that broader hardware angle in our look at unified memory and the next wave of AI PCs.

2. Adobe Firefly and the Collapse of the “Generate Somewhere Else” Workflow

Adobe’s Firefly direction matters because it reflects a bigger trend in creative software: AI is no longer being treated as a separate tool sitting off to the side. It is being integrated directly into the editing environment itself.

Why it matters: For creators, marketers, and small businesses, that changes the workflow dramatically. Instead of generating an asset in one app, exporting it, and editing it somewhere else, the generation and editing layers are increasingly becoming part of the same process.

What it means for everyday tools: The practical effect is speed. More of the creative process can happen in place, whether that means background replacement, prompt-based edits, resizing, or generating new elements without leaving the platform.

Why it changes the market: The companies that win this next phase of AI are not just the ones with strong raw models. They are the ones that embed AI in ways that reduce friction. Firefly is important because it points toward AI becoming native to mainstream design workflows rather than an external experiment.

That is a recurring theme across the AI market right now: the biggest shift is integration, not just capability.

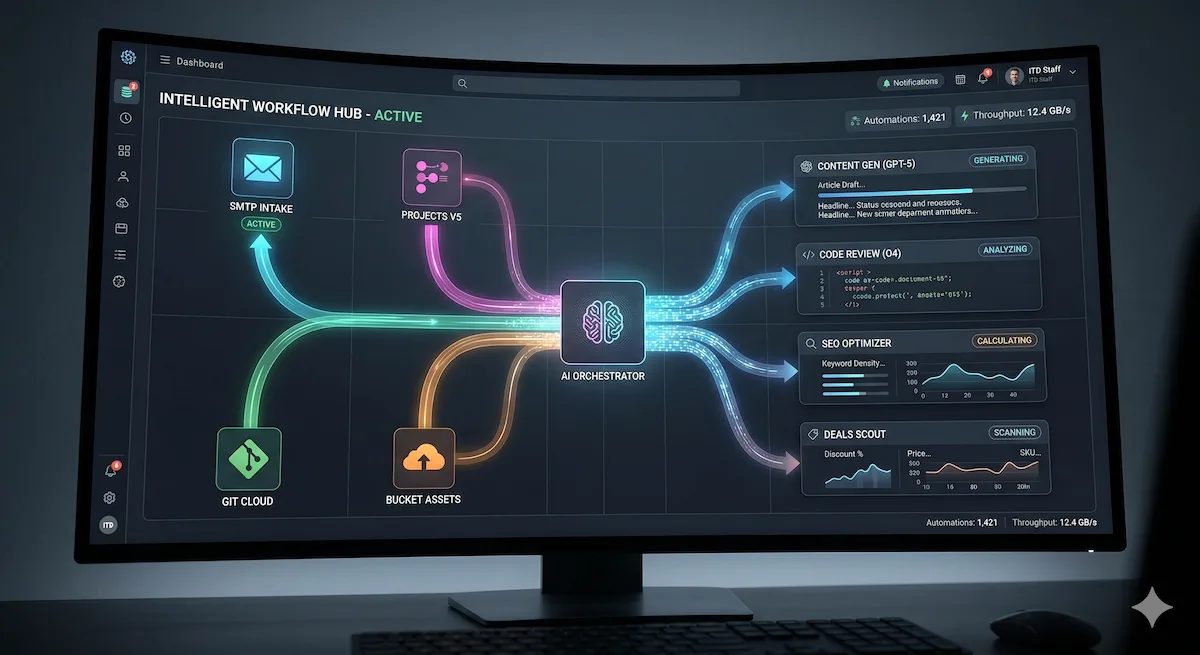

3. Microsoft Copilot Studio and the Move From Assistant to Agent

Microsoft’s Copilot push, with Copilot Studio as its agent-building tool, matters because it is helping define the next big AI transition: the move from passive assistance to active task execution.

Why it matters: Traditional AI helpers answer questions. Agent-style systems aim to do more than that. They are increasingly being designed to navigate apps, complete repetitive steps, and operate across software environments with less manual input.

What it means for everyday tools: In practical terms, this could mean software that helps build presentations, organize documents, populate spreadsheets, or complete workflow steps that previously required constant clicking and copy-pasting. The promise is not just faster writing, but faster completion of actual work.

Why it changes the market: This is where AI starts to alter the definition of productivity software itself. If applications become capable of acting on your behalf, the gap between “tool” and “assistant” gets much smaller.

That also has broader implications for hardware and platform competition, because more capable AI features increase pressure on vendors to support stronger local acceleration and better integration. That larger platform fight is already visible in our analysis of the AI PC chip battle between Intel, AMD, Apple, Nvidia, and Qualcomm.

4. Mistral and the Push Toward More Accessible AI Infrastructure

Mistral matters for a different reason than Google, Adobe, or Microsoft. It highlights the importance of models that make advanced AI more accessible to developers, enterprises, and smaller software builders.

Why it matters: Not every company wants to build its future around the biggest closed platforms. Stronger alternative models can lower deployment costs, expand customization options, and make it easier to build AI into products without relying entirely on the largest ecosystems.

What it means for everyday tools: End users may never know the model name behind the product they are using, but they will still feel the impact. More accessible AI infrastructure can mean better chat features, stronger document analysis, cheaper automation, and broader AI adoption across tools that would otherwise not be able to support it.

Why it changes the market: Mistral represents the idea that AI is not only becoming more powerful, but more distributed. That matters because the future of AI-enabled software will not be owned by a single company or tied to one platform category.

5. AdaptAI and the Idea of Context-Aware, Human-Aware Software

AdaptAI stands out because it points toward a more experimental but potentially important direction for AI: tools that respond not just to your commands, but to your context.

Why it matters: Instead of only reacting to text prompts, systems like this point toward software that can account for signals like workload, environment, or even stress. That is still early-stage territory, but it offers a preview of where proactive AI could go next.

What it means for everyday tools: In the long run, this could translate into software that knows when to simplify an interface, recommend a break, adjust notifications, or change how it presents information based on what the user is likely experiencing in the moment.

Why it changes the market: This is where AI stops being just a productivity layer and starts becoming part of the user experience itself. That has obvious upside, but it also raises questions around privacy, trust, and how much decision-making people actually want software to take over.

What These Five Models Actually Tell Us

These examples point to three larger trends that matter more than any individual model release.

AI is becoming less visible and more embedded

The most meaningful changes are increasingly happening inside products people already use, not in separate “AI destinations.” That makes the technology feel less like a novelty and more like a platform layer.

Software is shifting from reactive to proactive

The direction of travel is clear: AI is moving from answering questions toward anticipating needs, automating routine work, and carrying out more complex actions across tools.

Hardware and integration now matter as much as model quality

As AI features become more demanding and more deeply woven into everyday software, the devices underneath them matter more too. Memory capacity, local inference capability, and platform efficiency are becoming part of the user experience in a way that was easier to ignore a year ago.

Why This Matters for Regular Users

The average person does not need to memorize model names or benchmark results. What matters is whether the software they depend on is becoming faster, more useful, and more capable of reducing friction in daily tasks.

That is the real shift. AI is moving out of the “try this chatbot” phase and into the “this app now works differently” phase. In other words, the transformation is becoming less theoretical and more operational.

Final Thoughts

The next phase of AI will not be defined by which model sounds most impressive in a launch keynote. It will be defined by which systems actually improve the tools people use every day.

Gemini points toward long-context and multimodal assistance. Firefly shows how AI is collapsing creative workflows into one place. Copilot reflects the rise of agent-style productivity. Mistral highlights the growing importance of accessible model infrastructure. AdaptAI hints at a future where software becomes more context-aware and personally responsive.

Taken together, they suggest the same thing: AI is no longer just a feature. It is becoming part of the operating logic behind modern software.

Continue exploring InsightTechDaily’s coverage of AI platforms, hardware, and workflow shifts:

The AI PC Chip Battle: Intel, AMD, Apple, Nvidia, and Qualcomm Fight for the Future of Computing

Apple vs. AMD Unified Memory: What It Means for AI PCs in 2026