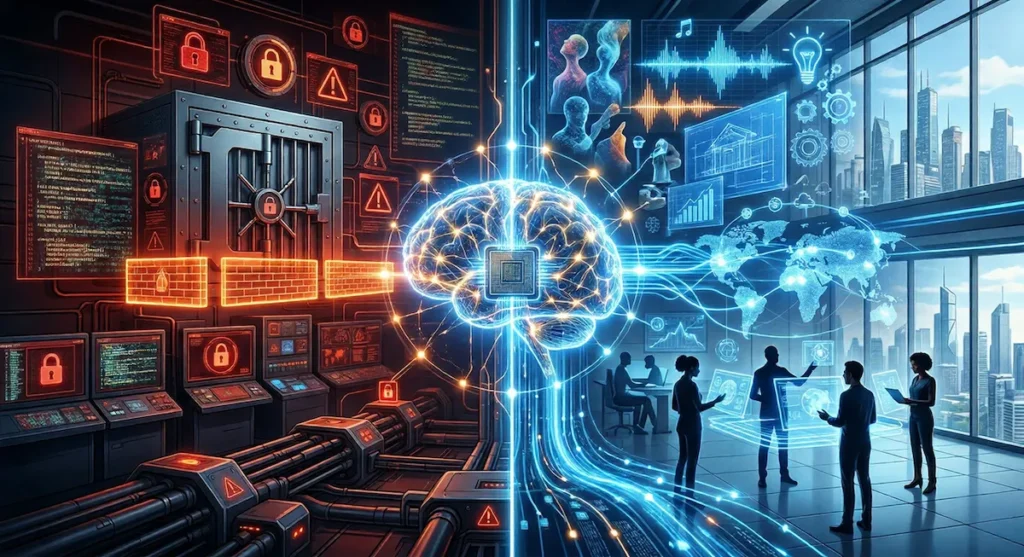

Artificial intelligence may be approaching a point where its capabilities are not just useful but potentially too powerful to release freely. The debate is no longer about better chatbots or smarter assistants. It is about whether some AI systems should be publicly available at all.

That question is moving closer to the center of the AI conversation following reports of Anthropic’s limited preview of Claude Mythos, a high-reasoning model reportedly tied to its Project Glasswing effort and aimed at cybersecurity research. Unlike a broad consumer assistant, this type of model appears to be positioned more like specialized infrastructure: powerful, narrow, and carefully controlled.

That makes Mythos interesting for more than just what it can do. It also forces a larger question the industry still has not answered: should more capable AI models be held back as a security risk, or should they be released and allowed to reshape the world in real time while humans adapt?

Why This Debate Matters Now

For the last few years, most public AI discussion has focused on convenience. Can a model write better emails, summarize documents faster, or help create code more efficiently? But the next stage of AI may be less about convenience and more about concentrated capability: what a single system can do, and who controls it.

A specialized model designed for deep security work is fundamentally different from a general-purpose chatbot. Instead of answering everyday prompts, it may be able to trace vulnerabilities through large codebases, simulate attacker logic, identify chains of weakness, and surface problems that human researchers might take far longer to detect.

That creates a tension the industry can no longer avoid. A system powerful enough to help defend digital infrastructure may also be powerful enough to expose it.

The Case for Holding Back Stronger AI Models

The argument for restraint is not hard to understand.

If a model can reason through software flaws at a very high level, identify exploitable weaknesses, or accelerate vulnerability discovery, then releasing it broadly may not just increase innovation. It may also lower the barrier for bad actors. In that sense, the same AI system that can help secure the internet could also make it easier to break.

That risk is not limited to nation-states or advanced threat groups. As AI capability improves, the potential for everyday users to misuse these tools also increases. Highly capable models could make it easier to generate sophisticated phishing campaigns, automate vulnerability scanning at scale, or assist in building more effective malware. What once required specialized expertise could become far more accessible, compressing the skill gap between experienced security researchers and less experienced actors.

This is especially important in cybersecurity, where tools do not stay “defensive” by default. Techniques built for auditing can often be repurposed for intrusion. That is one reason controlled access may look increasingly attractive to labs and enterprise partners. Rather than treating the most advanced models like open consumer products, companies may choose to treat them more like sensitive infrastructure.

There is precedent for that logic. Across the broader technology sector, some of the most powerful capabilities are not fully open by design. They are gated, monitored, licensed, or restricted because misuse carries outsized consequences.

But holding back powerful AI introduces a different kind of risk—one centered on who gets access and who does not.

If only a small number of companies, institutions, or governments are allowed to operate the most advanced models, then AI capability itself becomes concentrated. That raises difficult questions.

Who decides which organizations are trusted? Is it the companies building the models, government regulators, or a small group of industry partners? And perhaps more importantly, what happens when those decisions are not transparent?

In that scenario, the concern is not just misuse—it is imbalance. Restricting powerful AI could unintentionally place transformative technology into the hands of a select few, giving them disproportionate influence over security, infrastructure, and even the pace of innovation itself.

In other words, limiting access may reduce short-term risk, but it also shifts power. And in the AI era, control over capability may become just as important as capability itself.

The Case for Releasing Them Anyway

Focusing only on the risk tells an incomplete story.

History shows that powerful technology in the hands of creative and capable people has often been a catalyst for progress, not just disruption. Tools that once looked dangerous or destabilizing frequently became the foundation for new industries, new forms of collaboration, and entirely new ways of thinking.

Artificial intelligence may follow that same pattern. When advanced systems are accessible, they do not just create risk; they also expand what individuals and small teams can achieve. Researchers can tackle more complex scientific problems, developers can build more sophisticated tools, and entrepreneurs can move faster with fewer resources.

There is also a broader societal impact. AI has the potential to reduce long-standing communication barriers by improving real-time translation, contextual understanding, and cross-cultural collaboration. In a world where language and access have often created divides, more capable AI systems could help bridge those gaps, making information and opportunity more widely available.

Creativity is another area where open access could have an outsized effect. Just as the internet enabled new forms of media and expression, advanced AI may unlock entirely new categories of art, storytelling, and entertainment. At the very least, it acts as an amplifier—allowing individuals to produce work that previously required large teams or significant resources.

The argument for broader release, then, is not just about speed or competition. It is about participation. When powerful tools are distributed, more people can contribute to solving difficult problems, whether in science, engineering, education, or the arts.

There is also a defensive dimension to this openness. When access is wider, adaptation happens faster. Industries evolve, security practices improve, and societies learn how to integrate new capabilities into everyday life. Restricting advanced AI may reduce short-term risk, but it could also slow the collective learning process that ultimately makes these systems safer to use.

In that sense, releasing powerful AI is not simply about letting technology run free. It is about trusting that human ingenuity, at scale, can turn capability into progress rather than just risk.

Why This Feels Bigger Than One Anthropic Model

Claude Mythos matters not just because of its reported capabilities, but because it points toward a broader shift in how frontier AI may evolve. The first wave of AI was largely about general-purpose assistance. The next wave may be about specialized high-reasoning systems built for security, research, infrastructure integrity, and mission-critical analysis.

That is a meaningful transition. It suggests the most important AI systems of the next few years may not be the ones ordinary users play with directly. They may instead be the systems operating behind the scenes, auditing platforms, strengthening enterprise defenses, and acting as force multipliers for governments and major technology companies.

In that sense, this debate overlaps with a much broader change in computing. As we have discussed in our look at the future of agentic operating systems, AI is steadily moving from being a standalone tool to becoming part of the decision-making layer of computing itself. Once AI shifts from helper to infrastructure, the question of access becomes much more serious.

Can Humans Adapt Fast Enough?

The optimistic view is that people always adapt. The uneasy view is that this time the pace may be different.

Previous technology transitions often gave institutions, regulators, industries, and users time to absorb the shock. AI may not be moving that slowly. Model capabilities, hardware acceleration, and deployment pressure are all advancing at once. That means society is not just adapting to a single product cycle. It is adapting to a fast-moving stack of changes happening together.

That challenge becomes even clearer when paired with the escalating competition around chips, infrastructure, and AI compute. As we noted in our analysis of the 2026 five-way chip war, the companies shaping the AI era are not just building smarter models. They are racing to control the hardware, platforms, and ecosystems those models depend on. The faster that race moves, the harder it becomes for slower-moving institutions to keep up.

So yes, humans can adapt. History says we usually do. But adaptation is not automatic, and it is rarely painless. It often comes after disruption, not before it.

An Emerging Divide: Public AI vs. Restricted AI

One possible outcome is that the AI market begins to split in two.

On one side, there would be public-facing models designed for broad use: assistants for writing, search, planning, coding, and media generation. On the other side, there would be tightly restricted models trained or tuned for sensitive, high-impact work such as cyber defense, vulnerability research, and infrastructure analysis.

If that happens, the biggest AI story may no longer be model size alone. It may be who gets access to the most capable systems, under what conditions, and for what purpose.

That would mark a major cultural shift. The public conversation around AI often assumes the future is about more access, more openness, and more capability reaching more people. But the Mythos-style approach hints at another possibility: the most powerful AI may become less public over time, not more.

The Bottom Line

Anthropic’s Claude Mythos preview surfaces one of the most important questions in AI right now. The question is not whether more powerful models are coming, because they clearly are. It is how they should enter the world.

Should the strongest systems be held back because the misuse risk is too high? Or should they be released more broadly, with the expectation that people, institutions, and industries will adapt the way they always have?

There is no clean answer yet. But one thing is becoming harder to ignore: the future of AI may not be defined by how powerful these systems become, but by who is allowed to use them—and who is not.